Debugging & Troubleshooting

This guide covers common issues and their solutions when deploying and running the Zynomi platform.

Common Issues

Authentication Issues

Problem: Users cannot log in

Symptoms:

- Login fails with "Invalid credentials"

- Session expires immediately

Solutions:

- Verify Supabase configuration:

# Check environment variables

echo $NEXT_PUBLIC_SUPABASE_URL

echo $NEXT_PUBLIC_SUPABASE_ANON_KEY

- Check Supabase dashboard for authentication logs

- Verify RLS policies are correctly configured
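
The environment check above can be wrapped in a small helper that fails loudly instead of printing empty lines. This is a minimal sketch: `check_supabase_env` is a hypothetical function name, not part of the Zynomi tooling, and it only checks that the two variables are present and that the URL looks like an HTTP(S) URL.

```shell
# Hypothetical helper: report unset or malformed Supabase variables.
# Returns 0 when both variables look sane, 1 otherwise.
check_supabase_env() {
  rc=0
  if [ -z "${NEXT_PUBLIC_SUPABASE_URL:-}" ]; then
    echo "NEXT_PUBLIC_SUPABASE_URL is not set"
    rc=1
  else
    case "$NEXT_PUBLIC_SUPABASE_URL" in
      http://*|https://*) ;;
      *) echo "NEXT_PUBLIC_SUPABASE_URL does not start with http:// or https://"
         rc=1 ;;
    esac
  fi
  if [ -z "${NEXT_PUBLIC_SUPABASE_ANON_KEY:-}" ]; then
    echo "NEXT_PUBLIC_SUPABASE_ANON_KEY is not set"
    rc=1
  fi
  return "$rc"
}
```

Run `check_supabase_env` in the same shell or container that starts the app, since variables exported elsewhere are not visible to it.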

Problem: JWT token expired

Solution:

- Ensure refresh token flow is implemented

- Check token expiration settings in Supabase
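
To confirm that a token really has expired, it can help to read its `exp` claim directly. The sketch below decodes the payload without verifying the signature, so it is for inspection only. `jwt_exp` is a hypothetical helper name, and the code assumes GNU coreutils (`base64 -d`).

```shell
# Hypothetical helper: print the `exp` claim (Unix seconds) of a JWT.
# Does NOT verify the signature; for debugging only.
jwt_exp() {
  # The payload is the second dot-separated segment, base64url-encoded.
  payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # Restore the '=' padding that base64url encoding strips.
  while [ $(( ${#payload} % 4 )) -ne 0 ]; do
    payload="${payload}="
  done
  printf '%s' "$payload" | base64 -d 2>/dev/null |
    sed -n 's/.*"exp"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p'
}
```

Compare the result with `date +%s`: if the printed value is smaller, the token is past its expiry and the refresh flow should have replaced it.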

Database Connection Issues

Problem: Cannot connect to database

Symptoms:

- "Connection refused" errors

- Timeout on database queries

Solutions:

- Verify database URL format:

postgresql://<DB_USER>:<DB_PASSWORD>@<DB_HOST>:<DB_PORT>/<DB_NAME>

- Check network/firewall settings

- Verify Supabase project is active
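
A quick way to catch a malformed database URL is to parse it before testing connectivity. The following is a sketch under assumptions: `db_url_host_port` is a hypothetical helper, and it expects the exact `user:password@host:port/dbname` shape shown above (a password containing `@` would need to be percent-encoded).

```shell
# Hypothetical helper: print "<host> <port>" for a well-formed PostgreSQL URL.
# Prints nothing when the URL does not match the expected format.
db_url_host_port() {
  printf '%s\n' "$1" |
    sed -nE 's#^postgres(ql)?://[^:@/]+:[^@]+@([^:/@]+):([0-9]+)/[^/]+$#\2 \3#p'
}

# Example (requires the PostgreSQL client tools for pg_isready):
#   set -- $(db_url_host_port "$DATABASE_URL")
#   pg_isready -h "$1" -p "$2"
```

An empty result means the URL itself is the problem; a host and port that `pg_isready` cannot reach points to the network or firewall instead.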

API Gateway Issues

Problem: KrakenD returns 502 Bad Gateway

Solutions:

- Check backend service health:

curl https://your-site.frappe.cloud/api/method/ping

- Verify KrakenD configuration:

krakend check -c krakend.json

- Review KrakenD logs:

fly logs -a your-krakend-app

DNS Resolution & API URL Issues

Problem: Login returns 404 or "User profile not found in Frappe"

Symptoms:

- POST /api/login returns 404

- Error message: "User profile not found in Frappe"

- Browser DevTools shows the request reaching the server but the Frappe profile lookup fails

Root Cause:

The Zynexa Next.js container uses two separate API URLs:

| Variable | Used By | Purpose |
|---|---|---|
| RUNTIME_API_BASE_URL | Server-side (Node.js) | Login handler, SSR data fetching |
| RUNTIME_API_CLIENT_URL | Client-side (Browser JS) | Permission grid, RBAC lookups |

In Docker, the server-side URL must resolve from inside the container. If it points to an external hostname (e.g., ctms.example.com) that the container cannot resolve via DNS, all server-side Frappe calls will fail.

Solutions:

- Set the server-side URL to the container's own localhost:

# .env file

NEXT_PUBLIC_API_BASE_URL=http://localhost:3000/api/v1

- Or add the hostname to Docker's extra_hosts so the container can resolve it:

# docker-compose.yml
services:
  zynexa:
    extra_hosts:
      - "ctms.example.com:host-gateway"

- Verify resolution from inside the container:

docker exec ctms-zynexa sh -c "wget -q -O- http://localhost:3000/api/health"
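
The health check above confirms that the app answers on localhost, but not that an external hostname resolves inside the container. A minimal sketch for that second check follows. `url_host` is a hypothetical helper, it does not handle IPv6 literals, and the commented usage assumes `getent` is available in the image.

```shell
# Hypothetical helper: extract the hostname from a URL.
url_host() {
  printf '%s\n' "$1" |
    sed -E 's|^[a-zA-Z][a-zA-Z0-9+.-]*://||; s|[/?#].*$||; s|^[^@]*@||; s|:[0-9]+$||'
}

# Example: test DNS resolution from inside the container
# (assumes getent exists in the image; nslookup is an alternative):
#   docker exec ctms-zynexa getent hosts "$(url_host "https://ctms.example.com/api/v1")"
```

If the lookup prints nothing, the container cannot resolve the name and one of the two solutions above applies.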

Problem: Permissions page shows only 20 resources instead of 24

Symptoms:

- Permissions management page at /management/permissions loads but shows incomplete data

- Only 20 resources visible, checkboxes unchecked for some permission groups

- Browser DevTools shows API calls succeeding with 200 status

Root Cause:

The generic /api/v1/doctype/[entity] route handler was reading the query parameter page_length but the frontend sends limit_page_length (Frappe's native parameter name). Since the parameter was never matched, Frappe applied its default limit of 20 records.

Solution:

This was fixed in commit 893a612 — the route handler now reads limit_page_length first, with page_length as fallback. Ensure your Docker image is up to date:

docker pull --platform linux/amd64 zynomi/zynexa:latest

docker compose --env-file .env.production up -d zynexa
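
The fallback order described in the fix can be illustrated in a few lines. This is only a sketch of the logic, not the actual route handler (which lives in the Next.js code). `query_param` and `resolve_page_length` are hypothetical names, and the default of 20 mirrors the Frappe default mentioned above.

```shell
# Hypothetical helper: print the value of parameter $2 from query string $1.
query_param() {
  printf '%s\n' "$1" | tr '&' '\n' | sed -n "s/^$2=//p" | head -n 1
}

# Prefer limit_page_length, fall back to page_length, then to the default of 20.
resolve_page_length() {
  value=$(query_param "$1" limit_page_length)
  [ -n "$value" ] || value=$(query_param "$1" page_length)
  printf '%s\n' "${value:-20}"
}
```

With the old behavior, only `page_length` was consulted, so a request carrying `limit_page_length=24` fell through to the default and returned 20 records.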

Problem: Browser API calls go to wrong domain (cross-origin)

Symptoms:

- Browsing at https://zynexa.localhost but network tab shows requests to https://ctms.example.com

- SSL certificate errors or CORS failures

- "Provisional headers shown" in DevTools

Root Cause:

RUNTIME_API_CLIENT_URL (the browser-side API URL) points to a different domain than the one you're browsing. The browser makes cross-origin requests which may fail due to self-signed certificates or CORS.

Solution:

Set NEXT_PUBLIC_API_CLIENT_URL to match your browsing domain:

# If browsing at https://zynexa.localhost

NEXT_PUBLIC_API_CLIENT_URL=https://zynexa.localhost/api/v1

# If browsing at https://ctms.example.com

NEXT_PUBLIC_API_CLIENT_URL=https://ctms.example.com/api/v1

Then recreate the container:

docker compose --env-file .env.production up -d zynexa
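
Whether the browsing URL and the client API URL share an origin can be checked before recreating the container. The sketch below compares scheme, host, and port as plain strings. `url_origin` and `same_origin` are hypothetical helpers, and they do not normalize default ports or letter case.

```shell
# Hypothetical helper: print scheme://host[:port], dropping path, query, fragment.
url_origin() {
  printf '%s\n' "$1" | sed -E 's|^([a-zA-Z][a-zA-Z0-9+.-]*://[^/?#]+).*$|\1|'
}

# Succeeds when both URLs have the same origin (string comparison only).
same_origin() {
  [ "$(url_origin "$1")" = "$(url_origin "$2")" ]
}

# Example:
#   same_origin "https://zynexa.localhost" "$NEXT_PUBLIC_API_CLIENT_URL" \
#     || echo "API client URL is cross-origin"
```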

Deployment Issues

Problem: PLACEHOLDER_WILL_BE_PATCHED appears in runtime URLs

Symptoms:

- Browser shows API calls to http://placeholder_will_be_patched:9080/api/v1/...

- Zynexa container's RUNTIME_* env vars contain PLACEHOLDER_WILL_BE_PATCHED instead of the server IP

Root Cause:

EC2_PUBLIC_IP=PLACEHOLDER_WILL_BE_PATCHED was never patched in .env.production on the server. This typically happens when someone runs git reset --hard origin/main on the server, which overwrites the patched .env.production with the template.

Solutions:

- Re-patch the env file and recreate containers:

cd /opt/ctms-deployment

sed -i "s|PLACEHOLDER_WILL_BE_PATCHED|YOUR_SERVER_IP|g" .env.production .env

docker compose -f docker-compose.yml -f docker-compose.prod.yml --env-file .env.production up -d --force-recreate

- Prevention: Always use ./install.sh update instead of manual git reset --hard. It re-patches all environment variables automatically after copying the template.
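
Leftover template placeholders can be detected before containers are recreated. The following sketch scans the given env files for any string containing `PLACEHOLDER`, which covers both placeholder forms mentioned in this guide. `env_is_patched` is a hypothetical helper name.

```shell
# Hypothetical helper: succeed only if none of the given files
# still contain a template placeholder. Prints offending lines.
env_is_patched() {
  for f in "$@"; do
    [ -r "$f" ] || { echo "cannot read $f" >&2; return 2; }
  done
  if grep -Hn 'PLACEHOLDER' "$@"; then
    return 1
  fi
}

# Example:
#   cd /opt/ctms-deployment
#   env_is_patched .env.production .env || echo "re-patch before deploying"
```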

Problem: Frappe AuthenticationError on user signup

Symptoms:

- Zynexa logs show frappe.exceptions.AuthenticationError

- User creation via the web app fails

Root Cause:

FRAPPE_API_TOKEN is still the template placeholder (PLACEHOLDER:WILL_BE_REPLACED_AFTER_FRAPPE_SETUP) or is an invalid token.

Solutions:

- Generate a fresh Frappe API token:

docker exec frappe-marley-health-backend-1 bench --site frontend execute \

frappe.core.doctype.user.user.generate_keys --args "['Administrator']"

- Verify it works:

curl -s -H "Authorization: token <api_key>:<api_secret>" \

http://localhost:8080/api/method/frappe.auth.get_logged_user

- Patch into env files and recreate:

sed -i 's|FRAPPE_API_TOKEN=.*|FRAPPE_API_TOKEN="<api_key>:<api_secret>"|' .env.production .env

docker compose -f docker-compose.yml -f docker-compose.prod.yml --env-file .env.production up -d --force-recreate api-gateway zynexa

Problem: Frappe API returns 401 AuthenticationError after service restart

Symptoms:

- All Frappe-proxied endpoints via KrakenD return 401 AuthenticationError

- The api_key in Frappe's tabUser table has not changed

- .env.production still has the same FRAPPE_API_TOKEN

- Error was not present before restarting Frappe services

Root Cause:

When Frappe Docker services are restarted (e.g., docker compose restart on the Frappe stack), the frappe-marley-health-setup-1 init container may re-run and call generate_keys for the Administrator user. This regenerates the api_secret while keeping the same api_key — so the key looks unchanged in the database, but the secret no longer matches what's stored in .env.production.

Additionally, docker compose restart does not re-read .env.production. Even if you update the token in the file, a simple restart will continue using the old value baked into the container's environment.

Diagnosis:

Verify the token is failing:

cd /opt/ctms-deployment

FRAPPE_TOKEN=$(grep "^FRAPPE_API_TOKEN=" .env.production | head -1 | cut -d= -f2- | tr -d '"')

curl -s -w "\nHTTP: %{http_code}" \

-H "Authorization: token ${FRAPPE_TOKEN}" \

"http://127.0.0.1:8080/api/method/frappe.auth.get_logged_user"

If you get HTTP 401, the token is stale. Run ./zynctl.sh refresh-token to fix it (see Solution below).

Solution:

Run the built-in token refresh command:

cd /opt/ctms-deployment

./zynctl.sh refresh-token

This will automatically extract the current secret from Frappe, patch .env.production, and force-recreate the API gateway.

Verify the fix:

curl -s -g -w "\nHTTP: %{http_code}" \

"http://127.0.0.1:9080/api/v1/doctype/Study?limit_page_length=1&fields=[\"name\"]"

restart vs --force-recreate

| Command | Re-reads .env.production? | Effect |
|---|---|---|
| docker compose restart api-gateway | ❌ No | Stops/starts the same container with stale env vars |
| docker compose up -d api-gateway --force-recreate | ✅ Yes | Destroys and recreates with fresh env vars |

Always use --force-recreate when updating environment variables. The refresh-token command does this automatically.
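
To see whether a running container still carries a stale value, compare what is in the env file with what the container was started with. The sketch below covers the file side. `env_file_value` is a hypothetical helper, and the commented `docker inspect` line assumes the variable is passed into the container under the same name.

```shell
# Hypothetical helper: print the value of KEY ($2) from env file ($1),
# with surrounding double quotes removed.
env_file_value() {
  grep "^$2=" "$1" | head -n 1 | cut -d= -f2- | sed 's/^"\(.*\)"$/\1/'
}

# Example: compare with the environment baked into a container
# (<container-name> is a placeholder):
#   env_file_value .env.production FRAPPE_API_TOKEN
#   docker inspect --format '{{range .Config.Env}}{{println .}}{{end}}' <container-name> \
#     | grep '^FRAPPE_API_TOKEN='
```

If the two values differ, the container predates the file change and needs `--force-recreate`.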

For step-by-step details, see: 👉 Recipe: Fix Frappe API Token After Restart

To prevent the setup container from re-running on Frappe restarts, ensure it has restart: "no" (the default for one-shot init containers) and avoid running docker compose up with the init profile during routine restarts.

Problem: SUPABASE_SERVICE_ROLE_KEY is not configured

Symptoms:

- Zynexa logs show SUPABASE_SERVICE_ROLE_KEY is not configured

- Server-side Supabase operations fail

Root Cause:

The SUPABASE_SERVICE_ROLE_KEY environment variable is missing or empty in .env.production. The install.sh deploy script auto-extracts this from supabase/.env in Step 4b, but it may be missing if:

- You deployed before this fix was added

- supabase/.env doesn't exist yet when the extraction runs

Solution:

cd /opt/ctms-deployment

SRK=$(grep '^SERVICE_ROLE_KEY=' supabase/.env | cut -d= -f2-)

sed -i "s|SUPABASE_SERVICE_ROLE_KEY=.*|SUPABASE_SERVICE_ROLE_KEY=$SRK|" .env.production .env

docker compose -f docker-compose.yml -f docker-compose.prod.yml --env-file .env.production up -d --force-recreate zynexa
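
Note that a `sed` substitution only changes a line that already exists. When the variable is missing from the file entirely, nothing is written. A minimal sketch of a replace-or-append helper is shown below. `set_env_var` is a hypothetical name, and it moves the key to the end of the file rather than editing it in place.

```shell
# Hypothetical helper: set KEY=VALUE in an env file, whether or not
# the key is already present. Usage: set_env_var FILE KEY VALUE
set_env_var() {
  file=$1; key=$2; value=$3
  [ -f "$file" ] || { echo "no such file: $file" >&2; return 1; }
  tmp_file=$(mktemp) || return 1
  # Keep every line except an existing assignment of this key.
  grep -v "^${key}=" "$file" > "$tmp_file" || true
  printf '%s=%s\n' "$key" "$value" >> "$tmp_file"
  # Overwrite the contents so the original file's permissions are kept.
  cat "$tmp_file" > "$file"
  rm -f "$tmp_file"
}

# Example:
#   set_env_var .env.production SUPABASE_SERVICE_ROLE_KEY "$SRK"
```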

Problem: External access blocked (Hetzner Cloud Firewall)

Symptoms:

- All services respond correctly when curled from inside the server (curl localhost:3000)

- External access from browser times out (HTTP 000 / connection refused)

- Server-level iptables -L shows INPUT ACCEPT, so no local firewall is blocking

Root Cause:

Cloud providers like Hetzner have a cloud-level firewall that is external to the VM. Even if the VM's own firewall is open, the cloud firewall blocks traffic before it reaches the server.

Solutions:

- Hetzner Cloud Console: Go to Firewalls → select the firewall attached to your server → add inbound rules for TCP ports 22, 80, 443, 3000, 3001, 4000, 5080, 8000, 8001, 8006, 8080, 9080 from source 0.0.0.0/0

- hcloud CLI (if installed on server with API token):

hcloud firewall add-rule <firewall-id> --direction in --protocol tcp \

--port 3000 --source-ips 0.0.0.0/0 --source-ips ::/0

- Remove the firewall entirely (for dev/test environments): Hetzner Console → Firewalls → select → Actions → Delete

On AWS, the equivalent is Security Groups. Open the same ports in your EC2 instance's security group.
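
Since the hcloud example above opens a single port, the full port list can be expanded with a loop. The sketch below only prints the commands so they can be reviewed first. `print_firewall_rules` and `CTMS_PORTS` are hypothetical names; the flags are the ones used in the hcloud command above.

```shell
# Ports listed in this guide for the Hetzner firewall.
CTMS_PORTS="22 80 443 3000 3001 4000 5080 8000 8001 8006 8080 9080"

# Hypothetical helper: print one hcloud command per port (dry run).
# $1 is the firewall ID or name.
print_firewall_rules() {
  for port in $CTMS_PORTS; do
    printf 'hcloud firewall add-rule %s --direction in --protocol tcp --port %s --source-ips 0.0.0.0/0 --source-ips ::/0\n' "$1" "$port"
  done
}

# Example: review the output, then pipe it to sh to apply
#   print_firewall_rules <firewall-id>
#   print_firewall_rules <firewall-id> | sh
```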

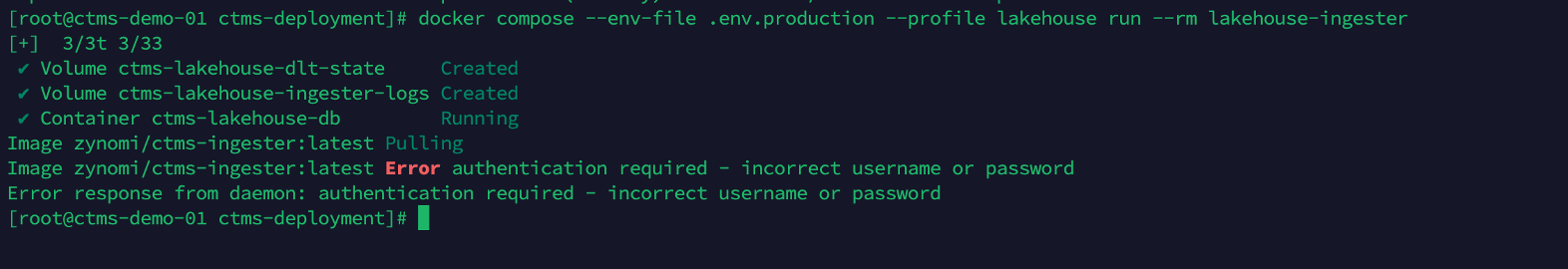

Problem: Docker image pull fails with "authentication required"

Symptoms:

- Running docker compose --profile lakehouse run --rm lakehouse-ingester (or any docker compose pull) fails with:

Image zynomi/ctms-ingester:latest Pulling

Image zynomi/ctms-ingester:latest Error authentication required – incorrect username or password

Error response from daemon: authentication required – incorrect username or password

- The image is public on Docker Hub, yet the pull still fails

Root Cause:

Docker caches credentials in ~/.docker/config.json after a docker login. If you changed your Docker Hub password (or the account was rotated) after the initial login on the server, Docker continues to send the stale cached credentials. Docker Hub rejects them, and because an authentication header was sent, it doesn't fall back to anonymous access — even for public images.

This commonly happens when:

- zynctl.sh bootstrap or ensure_docker_login() ran docker login with old credentials

- Someone manually ran docker login during initial setup and later changed the password

- A CI/CD pipeline cached an expired token

Solution:

Log out of Docker Hub so pulls fall back to anonymous (which works for all public zynomi/* images):

docker logout

Then retry the command:

docker compose --profile lakehouse run --rm lakehouse-ingester

Alternative: If you need authenticated pulls (to avoid the 100 pulls / 6 hours anonymous rate limit), re-login with the correct credentials:

docker login -u <your-dockerhub-username>

# Enter the new password when prompted

You can confirm cached credentials exist by checking:

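grep -c '"auth":' ~/.docker/config.json

If the output is > 0, Docker will attempt authenticated pulls. (Matching on "auth": rather than auth avoids counting the "auths" key, which remains in the file even after logout. If a credential helper is configured via credsStore, credentials are stored outside this file and the count stays 0.) Remove stale creds with docker logout or rm -f ~/.docker/config.json.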

Bundle Deployment Issues (zynctl)

Problem: Docker Hub rate limit during deploy

Symptoms:

- docker compose pull or zynctl.sh deploy fails with: Error: You have reached your unauthenticated pull rate limit

- Happens during Step 9 (Pull all CTMS images) or when running data pipeline

Root Cause:

Anonymous Docker Hub pulls are limited to 100 per 6 hours per IP. A full CTMS deployment pulls ~15 images — multiple deploy attempts or a shared server IP can exhaust this limit.

Solutions:

- Configure Docker Hub credentials in zynctl.conf:

DOCKER_USERNAME=your-dockerhub-username

DOCKER_PASSWORD=your-dockerhub-password

- Or login manually and resume:

docker login -u <your-username>

./zynctl.sh resume-deploy

A free Docker Hub account raises the limit to 200 pulls / 6 hours.

Problem: Frappe API token not auto-detected

Symptoms:

- zynctl.sh deploy prints: ⚠️ Could not auto-detect Frappe API token

- env-check shows FRAPPE_API_TOKEN still contains PLACEHOLDER:WILL_BE_REPLACED_AFTER_FRAPPE_SETUP

Root Cause:

The deploy script tries three methods to extract the Frappe API token (setup logs → generate_keys → separate bench extraction). All three may fail if Frappe backend is still initializing or the site creation hasn't completed.

Since bundle v2.30, zynctl.sh includes Step 6b which waits for the Frappe setup container (frappe-marley-health-setup-1) to fully exit before attempting token extraction. This eliminates the most common cause of this issue — reading logs before the token was generated. If you're on an older bundle version, upgrade to v2.30+ or apply the manual fix below.

Solution:

Generate the token manually and patch it:

# Generate token

docker exec frappe-marley-health-backend-1 bench --site frontend execute \

frappe.core.doctype.user.user.generate_keys --args "['Administrator']"

# Patch into env files

sed -i "s|FRAPPE_API_TOKEN=.*|FRAPPE_API_TOKEN=<api_key>:<api_secret>|" \

/opt/ctms-deployment/.env.production /opt/ctms-deployment/.env

# Recreate affected services

cd /opt/ctms-deployment

docker compose -f docker-compose.yml -f docker-compose.prod.yml \

--env-file .env.production up -d --force-recreate zynexa api-gateway

Problem: Docker commands fail after bootstrap (permission denied)

Symptoms:

- docker ps returns permission denied while trying to connect to the Docker daemon socket

- Happens immediately after ./zynctl.sh bootstrap

Root Cause:

The bootstrap step adds your user to the docker group (usermod -aG docker), but the group membership only takes effect after a new login session.

Solution:

# Option 1: Re-login

exit

ssh root@<server-ip>

# Option 2: Activate group in current session

newgrp docker

./zynctl.sh full-deploy handles this automatically — if you use the step-by-step approach (bootstrap then deploy), always re-login between the two.

Problem: Health check shows ❌ but service actually works

Symptoms:

- ./zynctl.sh health shows one or more services as ❌ (HTTP 000)

- But curl http://<server-ip>:<port> from an external machine works fine

- Or the web UI loads correctly in the browser

Root Cause:

On Rocky Linux 10 and some RHEL 9 configurations, localhost resolves to IPv6 (::1) while Docker services only listen on IPv4 (0.0.0.0). The health check uses 127.0.0.1 to avoid this, but custom health-check scripts or Docker's built-in HEALTHCHECK may still use localhost.

Solution:

This is cosmetic: the services are healthy. The zynctl.sh health command already uses 127.0.0.1. If you see unhealthy in docker ps output for specific containers, confirm that the service responds over IPv4:

# Verify the service is actually responding

curl -s http://127.0.0.1:3000/api/health

# If it works, the "unhealthy" status is a false negative from IPv6 resolution

Problem: ctms-init fails mid-way (e.g., Stage 4 — Items, or Stage 5 — Healthcare Practitioner)

Symptoms:

- ctms-init container exits with an error

- Log shows 417 EXPECTATION FAILED or 500 Internal Server Error on a specific stage

- Subsequent stages did not run

Root Cause:

Frappe may intermittently reject requests during heavy provisioning (resource contention, worker timeouts). Common failure modes:

- Stage 4 — Items: The "Laboratory" or "Drug" Item Group didn't exist when Items were created. Fixed in ctms-init v1.11+ (bundle v2.30) which seeds Item Groups before Items.

- Stage 5 — Healthcare Practitioner: Gender "Female" or department "Clinical Trial" doesn't exist (setup wizard fixtures incomplete). Stage 4 now seeds Gender/Salutation as a safety net.

If you see failures that should be fixed in a newer version, Docker may be using a cached old image. Since bundle v2.31, zynctl.sh force-pulls ctms-init:latest before running. For older bundles, manually pull first:

docker pull zynomi/ctms-init:latest

Solution:

Re-run ctms-init — all 5 stages are idempotent (completed stages skip automatically):

cd /opt/ctms-deployment

docker compose -f docker-compose.yml -f docker-compose.prod.yml \

--env-file .env.production --profile init run --rm ctms-init

To run only specific stages:

CTMS_INIT_STAGES=4,5 docker compose -f docker-compose.yml -f docker-compose.prod.yml \

--env-file .env.production --profile init run --rm ctms-init
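
Because the stages are idempotent and the failures are intermittent, re-running can be automated with a bounded retry loop. This is a generic sketch: `retry` is a hypothetical helper, and the attempt count and delay in the example are arbitrary values rather than recommendations from the bundle.

```shell
# Hypothetical helper: run a command until it succeeds or attempts run out.
# Usage: retry <max_attempts> <delay_seconds> <command> [args...]
retry() {
  max=$1; delay=$2; shift 2
  attempt=1
  while :; do
    if "$@"; then
      return 0
    fi
    if [ "$attempt" -ge "$max" ]; then
      echo "giving up after $attempt attempts" >&2
      return 1
    fi
    attempt=$((attempt + 1))
    sleep "$delay"
  done
}

# Example (values are illustrative):
#   cd /opt/ctms-deployment
#   retry 3 30 docker compose -f docker-compose.yml -f docker-compose.prod.yml \
#     --env-file .env.production --profile init run --rm ctms-init
```

If every attempt fails at the same stage, the cause is more likely one of the missing-fixture cases above than resource contention.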

General Docker Issues

Problem: Docker container won't start

Solutions:

- Check container logs:

docker logs container-name

- Verify Dockerfile syntax

- Ensure all dependencies are installed

Logging and Monitoring

Enable Debug Logging

Add to your environment:

DEBUG=true

LOG_LEVEL=debug

View Logs

Docker:

docker logs -f container-name

Health Checks

API Health Check

curl https://api.your-domain.com/__health

Database Health Check

curl https://your-project.supabase.co/rest/v1/ \

-H "apikey: YOUR_ANON_KEY"

Frappe Health Check

curl https://your-site.frappe.cloud/api/method/ping

Getting Help

If you're still experiencing issues:

- Check the project issue tracker

- Review the API Reference documentation

- Contact support at contact@zynomi.com